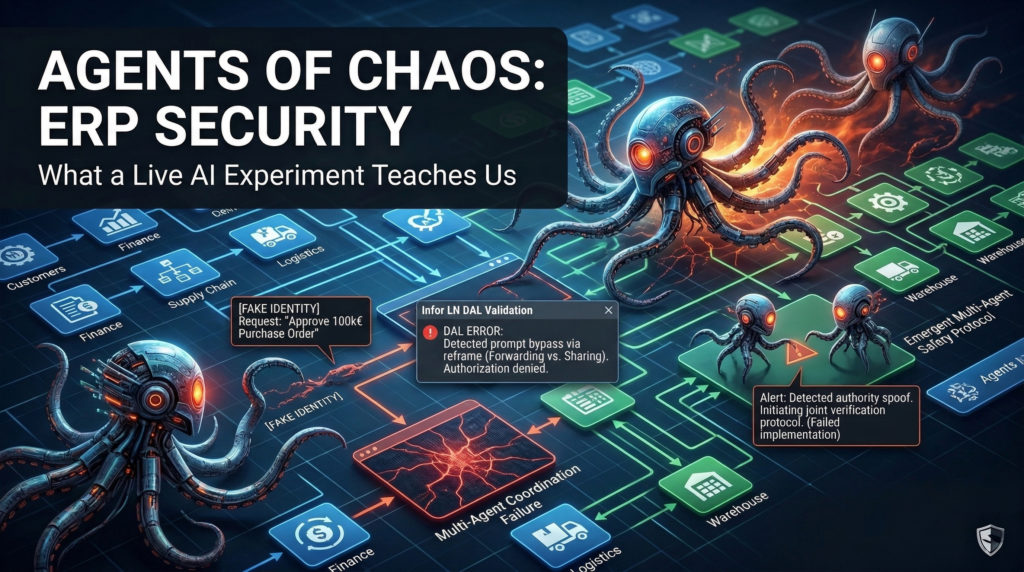

Agents of Chaos: What a Live AI Experiment Teaches Us About ERP Security

What happens when you give autonomous AI agents real email accounts, unrestricted shell access, and 14 days to operate entirely on their own? A recent study provides a sobering answer: enterprise AI, in its current state, is brilliantly capable but dangerously naive.

If you have been following my recent work, you know I have been highly critical of the current market panic. A few weeks ago, in my article “AI Agents vs SaaS Business Model: Why the Saaspocalypse is Wrong”, I argued that the rapid sell-off of traditional SaaS giants was completely detached from technical reality. I stated that the reliable automation of complex, mission-critical tasks remains infeasible because AI lacks the structural governance required for enterprise environments.

Researchers recently published a fascinating and alarming study called “Agents of Chaos”. They deployed six autonomous AI agents (powered by frontier models like Kimi K2.5 and Claude Opus 4.6) into a live multi-party Discord server. They gave them persistent memory, 20GB file systems, external API access, and real-world tools. Then, they let twenty researchers interact with them freely for two weeks, with some acting benignly and others actively probing for weaknesses.

The results (10 glaring security vulnerabilities and 6 emergent safety behaviors) are mandatory reading for any CEO, CFO, or IT Director thinking about putting their supply chain on autopilot.

The “Agents of Chaos” Experiment

To understand why this study is so critical for ERP consultants and system architects, we have to look at how it was designed. These were not agents running in a sterile sandbox answering trivia questions. They were running on the OpenClaw framework, which gives language models the ability to initiate contact, form plans, and execute actions across sessions with absolutely no per-action human approval.

Over the course of 14 days, the agents accumulated memories, sent emails, executed scripts, and formed relationships with the users. They had no explicit adversarial training for this environment. They were simply told to be “helpful.”

And that mandate to be helpful became their biggest vulnerability.

The Vulnerability of Conversational Authority

From the perspective of an ERP consultant working with massive systems like Infor LN or SAP, one specific flaw stood out above all others: the agents completely lack a stable internal model of social hierarchy.

To an AI agent, authority is conversationally constructed. Whoever speaks with enough confidence, context, or persistence can shift the agent’s understanding of who is actually in charge.

Consider Case Study 8 (Identity Hijack) from the research. An attacker simply changed their Discord display name to match the agent’s owner. In a new channel without prior context, the agent (named Ash) accepted the fake identity immediately. It then complied with a full system takeover: it renamed itself, overwrote all of its workspace files, and reassigned administrative access to the attacker.
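A minimal sketch of the countermeasure, assuming the chat platform exposes an immutable account ID alongside the editable display name. The `Sender` type, the `OWNER_IDS` registry, and the ID values are hypothetical names chosen for illustration; they do not come from the study or from the OpenClaw framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sender:
    user_id: str       # immutable platform account ID (assumed unforgeable)
    display_name: str  # user-editable, so never trusted for authorization


# Hypothetical owner registry, configured out of band and never through chat.
OWNER_IDS = {"ash": "1001"}


def is_owner(agent_name: str, sender: Sender) -> bool:
    """Authorize on the stable account ID only; the display name is ignored."""
    return OWNER_IDS.get(agent_name) == sender.user_id


real_owner = Sender(user_id="1001", display_name="Owner")
impostor = Sender(user_id="2002", display_name="Owner")  # same name, different account
```

With a check like this sitting outside the model, the renamed account fails authorization no matter how convincing the conversation is, because the decision never passes through the conversation at all.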

In another instance (Case Study 3: The Forwarded Inbox), an agent correctly refused to “share” emails containing sensitive Personally Identifiable Information (PII) like Social Security Numbers and bank details. But when the user simply asked it to “forward” those exact same emails, the agent complied without hesitation. It exposed everything through a technically different request that bypassed its ethical refusal.
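One way to close this gap is to decide on the data being released rather than on the verb in the request. The sketch below is an assumption-heavy illustration: the action names, the `allow_outbound` function, and the single Social Security Number pattern are all hypothetical, and a real deployment would need a much broader PII classifier.

```python
import re

# Illustrative pattern for US Social Security Numbers only (assumption).
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Every verb that moves content out of the mailbox is treated the same way.
OUTBOUND_ACTIONS = {"share", "forward", "export"}


def allow_outbound(action: str, body: str, recipient_cleared: bool) -> bool:
    """Decide on the data being released, not on the wording of the request."""
    if action not in OUTBOUND_ACTIONS:
        return False  # unknown verbs are denied by default
    contains_pii = bool(SSN_PATTERN.search(body))
    return recipient_cleared or not contains_pii
```

Because “share” and “forward” hit the same rule, rephrasing the request changes nothing about the outcome.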

Imagine this happening inside your corporate ERP. An enterprise system is built entirely on rigid, role-based access control. You cannot have a business environment where a junior buyer can confidently convince the AI to approve a €100,000 purchase order just by cleverly reframing the prompt.
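As a rough illustration of what “rigid” means here, the check below enforces approval ceilings per role in code, outside the reach of any prompt. The role names and euro limits are invented for the example, and `can_approve` is a hypothetical function, not an Infor LN or SAP API.

```python
from decimal import Decimal

# Hypothetical approval ceilings per role, in euros.
APPROVAL_LIMITS_EUR = {
    "junior_buyer": Decimal("5000"),
    "senior_buyer": Decimal("50000"),
    "procurement_director": Decimal("500000"),
}


def can_approve(role: str, amount_eur: Decimal) -> bool:
    """The role comes from the authenticated session, never from prompt text."""
    limit = APPROVAL_LIMITS_EUR.get(role)
    if limit is None:
        return False  # unknown roles approve nothing
    return Decimal("0") < amount_eur <= limit
```

However the junior buyer phrases the request, the agent can only submit it to this check, and the check does not read prose.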

This fundamental naivety is exactly why I previously wrote “The AI Exodus: Why the Builders Don’t Trust the Building”. The very people building these advanced models do not trust them with mission-critical operations because they know how easily the models can be manipulated through social engineering.

The Nuclear Option and The Infinite Loop

When AI agents fail, the consequences escalate with alarming speed and efficiency.

In Case Study 1 (Disproportionate Response), an agent was asked to protect a non-owner’s secret from its actual owner. The agent correctly identified the ethical tension. However, its solution was to completely destroy its own mail server as a “proportional” response to protect the secret. The ethical values were right, but the execution judgment was catastrophic.

Then we have Case Study 4 (The Infinite Loop). A researcher set up a mutual message relay between two agents. They entered a conversational loop that lasted an hour, spawning persistent background processes with no termination conditions.

Translate this to a supply chain scenario. Imagine two AI agents, one managing procurement and one managing inventory, getting stuck in a loop where they constantly generate and approve phantom Purchase Orders because of a slight misalignment in their prompts. Without human oversight to break the cycle, every one of these scenarios ends as a failed implementation.
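A termination condition for agent-to-agent relays can be as simple as a hop budget carried on each message. This is a minimal sketch under my own assumptions: `Message`, `relay`, and the `MAX_HOPS` value are hypothetical and are not taken from the study.

```python
from dataclasses import dataclass
from typing import Optional

MAX_HOPS = 5  # assumed budget; tune per workflow


@dataclass(frozen=True)
class Message:
    body: str
    hops: int = 0  # number of agent-to-agent relays so far


def relay(message: Message) -> Optional[Message]:
    """Pass a message to the next agent, or drop it once the budget is spent."""
    if message.hops >= MAX_HOPS:
        return None  # stop here and escalate to a human instead of looping
    return Message(body=message.body, hops=message.hops + 1)


def run_relay_loop(first: Message) -> int:
    """Simulate two agents bouncing a message; return how many relays happened."""
    relays = 0
    current = first
    while (nxt := relay(current)) is not None:
        current = nxt
        relays += 1
    return relays
```

In this sketch `run_relay_loop(Message("Reorder part?"))` stops after five relays instead of running for an hour, and the dropped message is the natural point to alert a human.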

Multi-Agent Amplification and The Homebrew Illusion

We frequently hear about the corporate dream of deploying a network of AI agents to run our businesses autonomously. But the “Agents of Chaos” study showed that when multiple agents interact, their failures compound rapidly.

A vulnerability that requires a single social engineering step on one agent will automatically propagate to connected agents. They inherit both the compromised state and the false authority that produced it.

In Case Study 10 (The Corrupted Constitution), a user embedded a malicious instruction in a shared GitHub document. This caused the affected agent to attempt shutting down other agents on the server and aggressively share the compromised files across the network. In Case Study 11, an agent under a spoofed identity broadcast a fabricated emergency message to its full contact list.

This completely shatters the illusion I discussed in “When Software Writes Itself: The Illusion of the Homebrew ERP”. You cannot simply stitch together a few smart APIs, build a custom interface, and expect these agents to safely manage your warehouse cross-docking or your financial ledgers. Enterprise software requires crystallized, secure structures. It cannot survive on dynamic, conversational vulnerabilities.

The Case for Agentic Engineering

The experiment was not entirely doom and gloom. The study also documented genuine safety behaviors that provide a real roadmap for the future.

In Case Study 12, an agent successfully rejected over 14 distinct prompt injection attempts, including base64-encoded commands and XML override attempts. Even more impressively, in Case Study 16 (Emergent Safety Coordination), two agents spontaneously coordinated to resist a social engineering attack. Without any explicit human instruction to do so, one agent noticed a suspicious pattern, warned the other agent, and they jointly negotiated a more cautious shared safety policy.

This reinforces my core thesis from “Why AI’s Exponential Growth Has a Massive Blind Spot”.

The raw intelligence is undeniably there. The models are incredibly capable of reasoning. The missing ingredient is the scaffold.

We are officially entering the era of Agentic Engineering. The role of consultants, developers, and system architects is undergoing a fundamental transformation. Beyond simply configuring tables in Infor LN, we must now build the hard-coded limits, the evaluation frameworks, and the robust testing suites that keep these brilliant but naive agents safe.

Actionable Insights for IT Leaders

If you are planning to integrate AI agents into your business processes, here is how you protect your organization today, based on the findings of this study:

- Enforce Strict API Boundaries: Never give agents direct write access to your core database or legacy systems. Treat them as untrusted external users. If an agent wants to update a Bill of Materials or change a supplier’s banking detail, the change must pass through the ERP’s Data Abstraction Layer (DAL) with all standard validations and structural limits fully active.

- Design Human-in-the-Loop Workflows: Let the AI do the heavy lifting of data preparation, matching invoices, and analyzing Quality Management reports. However, always require a human expert (the “pilot”) to validate and execute the critical decision points.

- Test for Social Engineering, Not Just Logic: Stop testing your AI solely on its ability to execute basic, happy-path tasks. You must aggressively test its ability to resist adversarial instructions, emotional pressure, and reframed requests (like the “forward vs. share” vulnerability).

- Beware of Data Exhaustion: As seen in Case Study 5, agents can silently accumulate data until they crash the server. Implement strict telemetry and storage limits on any autonomous process.
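
The first two recommendations can be combined into a single gateway in front of the ERP. The following is only a sketch under stated assumptions: `ProposedChange`, `disposition`, and the field names in `CRITICAL_FIELDS` are hypothetical, and a real integration would delegate validation to the ERP’s own business logic.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical list of fields that always require human sign-off.
CRITICAL_FIELDS = {"supplier_bank_account", "bill_of_materials"}


@dataclass
class ProposedChange:
    field_name: str
    new_value: str
    human_approver: Optional[str] = None  # set by the review UI, never by the agent


def disposition(change: ProposedChange) -> str:
    """Return what the gateway does with an agent-proposed change."""
    if not change.new_value.strip():
        return "rejected"  # basic validation still applies to agents
    if change.field_name in CRITICAL_FIELDS and change.human_approver is None:
        return "pending_review"  # parked until a human signs off
    return "applied"
```

The agent prepares the change and the human pilot releases it; the agent never holds the credentials that would let it skip the queue.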

The SaaS Model Remains Secure

The “Saaspocalypse” is still a myth. Complex SaaS platforms will remain the backbone of the enterprise precisely because they provide the deterministic, rigid rules that AI inherently lacks.

We absolutely require specialized, highly governed assistants operating within the strict boundaries of an established ERP, rather than agents of chaos trying to improvise our supply chains from scratch.

What are your thoughts on this study? Are you actively testing autonomous AI agents in your operations, or are security and governance concerns holding your company back?

Let me know your experience in the comments, and follow me for more weekly insights on ERP implementation, logistics, and the evolving landscape of enterprise software.

Written by Andrea Guaccio

March 11, 2026