How Rigid SQL Queries Are Fueling Your AI Hallucinations

You plug a shiny AI into your legacy ERP, expecting magic. Instead, it confidently lies to your face. The real culprit? You are still querying historical records like it’s 2005.

As an ERP consultant, I read about these scenarios all the time. Executives rush to stick Generative AI on top of enterprise systems, hoping for instant, conversational insights. They point large language models at old data lakes and wait for the results.

But this approach usually breaks down the moment you step onto the factory floor.

If you try extracting semantic meaning using rigid, keyword-based methods to feed a modern AI, you are practically begging for hallucinations.

Let’s dismantle how we query enterprise data today and where AI-ready architectures are actually heading. Getting this wrong right now will derail your entire AI strategy.

The Limitation of Traditional ERP Queries

Databases in systems like Infor LN are phenomenal at what they actually do: precision and transactional integrity.

They are engineered to execute specific rules rapidly and flawlessly. If you know exactly what you are looking for, classic SQL is perfect. When you need the exact status of a specific production order, a simple SQL statement does the job. You target the tisfc001 table, map the primary keys, and you retrieve your exact record without ambiguity.
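Here is a minimal sketch of that kind of exact lookup. It uses Python's built-in sqlite3 module as a stand-in database. The table is a heavily simplified, hypothetical version of tisfc001. The column names t_pdno (order number), t_mitm (item) and t_osta (status), the order numbers, and the status values are illustrative assumptions, not the real Infor LN schema.

```python
import sqlite3

# Hypothetical, heavily simplified stand-in for the Infor LN production order table.
# Column names and status values are illustrative assumptions.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tisfc001 (t_pdno TEXT PRIMARY KEY, t_mitm TEXT, t_osta TEXT)")
conn.executemany(
    "INSERT INTO tisfc001 (t_pdno, t_mitm, t_osta) VALUES (?, ?, ?)",
    [("SFC000101", "PUMP-A", "Released"), ("SFC000102", "PUMP-B", "Completed")],
)


def order_status(conn: sqlite3.Connection, order_no: str):
    """Exact primary-key lookup: returns the status string, or None if no such order."""
    row = conn.execute(
        "SELECT t_osta FROM tisfc001 WHERE t_pdno = ?", (order_no,)
    ).fetchone()
    return row[0] if row else None


print(order_status(conn, "SFC000102"))  # Completed
```

The lookup returns either exactly one row or nothing. That is the behavior you want for transactional questions.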

But historical analysis is rarely that surgically precise.

When a supply chain manager asks, “Why are we consistently late on this specific product line?”, a standard SQL query cannot answer that directly. It requires manually joining five different modules across purchasing, warehousing, and production. More importantly, it requires interpreting unstructured notes left by different people in different departments.

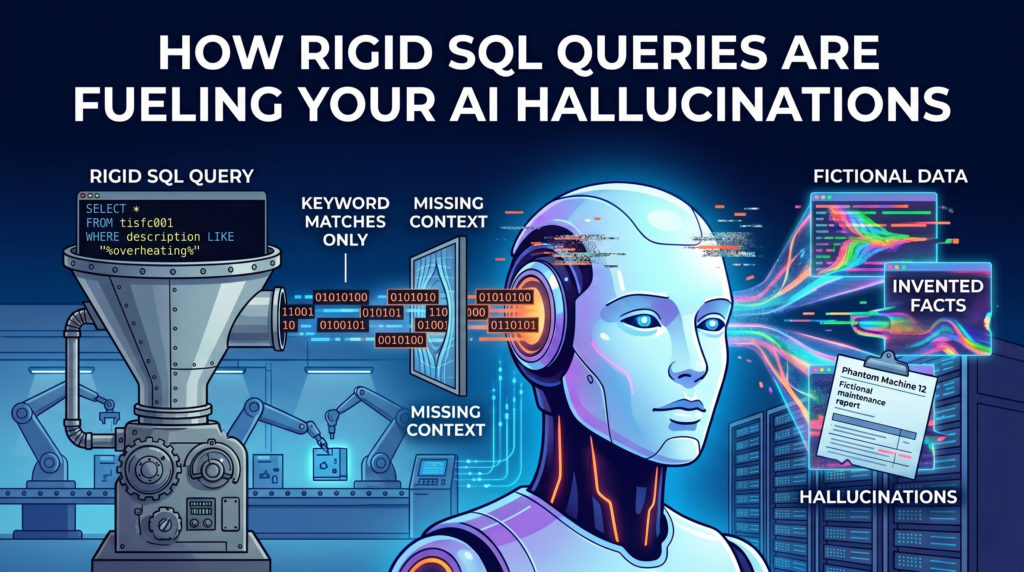

Imagine investigating a recurring machine failure by analyzing quality defects. You write a standard SQL query with WHERE description LIKE '%overheating%'. Your legacy database will obediently return only the rows that contain that literal string.

What does it miss? Everything else. It completely ignores critical records where a rushed operator typed “high temperature,” “running hot,” or “burning components” at the end of their shift.
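The following sketch reproduces this failure with sqlite3 and a hypothetical defect_log table. Its descriptions mirror the examples above. The table and its rows are invented for illustration.

```python
import sqlite3

# Hypothetical quality-defect log; descriptions mirror the examples in the text.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE defect_log (id INTEGER PRIMARY KEY, description TEXT)")
conn.executemany(
    "INSERT INTO defect_log (description) VALUES (?)",
    [
        ("Spindle motor overheating during second shift",),
        ("high temperature alarm on press 4",),
        ("running hot again, operator stopped line",),
        ("burning components smell near drive",),
        ("Label printer out of paper",),
    ],
)

# Keyword search: SQLite's LIKE ignores ASCII case by default,
# but it still matches characters, not meaning.
rows = conn.execute(
    "SELECT description FROM defect_log WHERE description LIKE '%overheating%'"
).fetchall()
print(len(rows), rows)
```

Only the first row comes back. The three other heat-related notes do not contain the literal substring, so the query never sees them.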

Traditional SQL lacks semantic understanding. It only sees rigid characters. When you feed these incomplete, keyword-filtered results into an AI, the model draws conclusions based on a fractured reality. This is exactly where business insights go to die.

The Semantic Advantage

If you need to interrogate historical data looking for blurry correlations, a Vector Database provides a clear structural advantage.

Data vectorization translates human text into numerical coordinates called embeddings. Texts with similar meanings receive nearby coordinates, so the data is grouped by context and meaning rather than by spelling. Think of an embedding as a GPS coordinate for a specific concept.

Instead of matching letters, the database matches the underlying meaning. If a planner types “delayed by supplier” and a warehouse operator logs “waiting on parts,” a classic SQL query sees two unrelated problems. A vector database recognizes that they describe the same issue.

In a vector database, the terms “overheating” and “high temperature” sit right next to each other in the embedding space.
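Here is a minimal illustration of what “right next to each other” means. It assumes hand-made three-dimensional vectors in place of real model output. Real embeddings typically have hundreds or thousands of dimensions, and the numbers below are invented. Closeness is measured with cosine similarity, a common choice for comparing embeddings.

```python
import math

# Toy 3-d "embeddings" invented for illustration; a real embedding model
# would produce much longer vectors.
embeddings = {
    "overheating":      [0.90, 0.10, 0.05],
    "high temperature": [0.85, 0.15, 0.10],
    "waiting on parts": [0.05, 0.90, 0.20],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


near = cosine_similarity(embeddings["overheating"], embeddings["high temperature"])
far = cosine_similarity(embeddings["overheating"], embeddings["waiting on parts"])
print(f"near={near:.3f} far={far:.3f}")
```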

By offloading your transactional data into a vector-enabled database like Google AlloyDB, you stop matching strings and start matching meaning. Streaming pipelines usually handle this offloading, moving the data safely away from the ERP core.

You gain the ability to query years of historical data using natural language. The system understands intent. Users no longer need to memorize complex SQL syntax, navigate obscure tables, or guess at legacy abbreviations.
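Querying by meaning then becomes a nearest-neighbor search. You embed the question, rank the stored records by distance, and keep the top k. The sketch below does this by brute force in plain Python, using invented record IDs and toy vectors in place of real embeddings.

AlloyDB supports the pgvector extension, where the same ranking would normally be written in SQL with the cosine-distance operator <=>. It would look something like ORDER BY embedding <=> query_vector LIMIT k, where the table and column names are placeholders.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity, the same quantity pgvector's <=> operator returns."""
    dot = sum(x * y for x, y in zip(a, b))
    return 1.0 - dot / (math.hypot(*a) * math.hypot(*b))


# Invented records already offloaded from the ERP, with toy 3-d "embeddings".
records = [
    ("QC-1001", "overheating on spindle motor", [0.90, 0.10, 0.05]),
    ("QC-1002", "high temperature alarm, press 4", [0.85, 0.15, 0.10]),
    ("QC-1003", "waiting on parts from supplier", [0.05, 0.90, 0.20]),
    ("QC-1004", "running hot after maintenance", [0.80, 0.20, 0.15]),
]


def top_k(query_vec, records, k=3):
    """Brute-force nearest neighbours: sort every record by distance, keep k IDs."""
    ranked = sorted(records, key=lambda rec: cosine_distance(query_vec, rec[2]))
    return [rec_id for rec_id, _text, _vec in ranked[:k]]


# Toy embedding for a question like "why do our machines keep overheating?"
query = [0.88, 0.12, 0.08]
print(top_k(query, records))
```

Brute force is fine for a demo. At scale, a vector database scans an approximate index instead of every row; pgvector, for example, offers HNSW and IVFFlat indexes.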

The Right Architecture to Start With: RAG & Core Isolation

You might be tempted to apply Generative AI directly to your transactional ERP. Don’t do it. Doing so will severely degrade operational performance and risk locking up your core system.

AI workloads are incredibly heavy. A single open-ended question can trigger broad scans across years of records. If you point a conversational AI directly at your live transactional tables, you will paralyze your daily operations. The warehouse employee trying to ship a truckload of goods should not have to wait for the system because an executive is asking the AI to analyze ten years of maintenance data.

The modern solution relies on Retrieval-Augmented Generation (RAG) and core isolation. A prime example is Infor’s transition away from its legacy Coleman ML. Infor is currently using LangChain to build agents capable of deep semantic reasoning, safely grounded in business logic.

The RAG workflow typically follows these operational steps:

- Offloading: You stream ERP data away from the transactional core. Using tools like Infor Data Fabric, you push this data into your vector database. This keeps your main system running smoothly.

- Retrieval: A user asks a question in natural language. The AlloyDB vector database performs a high-speed search to find conceptually relevant historical records.

- Generation: Only these retrieved records are sent, as the context of truth, to a Large Language Model such as one hosted on Vertex AI. The LLM processes this context and generates a precise answer without ever touching the live ERP.
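The three steps can be sketched end to end in a few dozen lines, but everything here is a stand-in:

- An in-memory sqlite3 database plays the transactional core.
- A Python list plays the vector database.
- toy_embed is a hypothetical keyword-to-concept mapper. It exists only so the example runs without an embedding model.

The final LLM call is deliberately left out, because it depends on your provider's SDK. The sketch stops at the grounded prompt that would be sent.

```python
import math
import re
import sqlite3

# Hypothetical stand-in for an embedding model: counts words from hand-picked
# concept groups. Only here so the sketch runs without a real model.
CONCEPTS = {
    "heat": {"overheating", "hot", "temperature", "burning"},
    "supply": {"supplier", "parts", "delayed", "waiting"},
}


def toy_embed(text):
    words = re.findall(r"[a-z]+", text.lower())
    return [sum(w in group for w in words) for group in CONCEPTS.values()]


def cosine(a, b):
    na, nb = math.hypot(*a), math.hypot(*b)
    if na == 0 or nb == 0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


# 1. Offloading: copy records out of the (simulated) transactional core once,
#    so that later questions never query it directly.
erp = sqlite3.connect(":memory:")
erp.execute("CREATE TABLE notes (id TEXT, text TEXT)")
erp.executemany("INSERT INTO notes VALUES (?, ?)", [
    ("N1", "Motor running hot at end of shift"),
    ("N2", "Line stopped, waiting on parts"),
    ("N3", "High temperature alarm on press 4"),
])
vector_store = [(i, t, toy_embed(t)) for i, t in erp.execute("SELECT id, text FROM notes")]


# 2. Retrieval: rank the offloaded records by similarity to the question.
def retrieve(question, k=2):
    q = toy_embed(question)
    scored = sorted(((cosine(q, v), i, t) for i, t, v in vector_store),
                    key=lambda s: s[0], reverse=True)
    return [(i, t) for score, i, t in scored[:k] if score > 0]


# 3. Generation: only the retrieved records go to the LLM, as grounding context.
def build_prompt(question):
    context = "\n".join(f"[{i}] {t}" for i, t in retrieve(question))
    return ("Answer using ONLY the records below. If they do not contain the "
            "answer, reply exactly: Data not found.\n\n"
            f"Records:\n{context}\n\nQuestion: {question}")


prompt = build_prompt("Why is the equipment overheating?")
print(prompt)
# The prompt would now be sent to your LLM provider; that call is omitted here.
```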

This RAG workflow is the correct foundation for any enterprise AI initiative right now. But treat it as a starting point, not a destination.

The industry is already moving toward Agentic Search: architectures where AI does not perform a single, static semantic lookup but instead decides autonomously how and where to search. It cycles through multiple retrieval strategies (vector search, structured queries, file systems, knowledge graphs) based on the complexity of the question.
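At the core of agentic search is a planning step that picks retrieval tools for each question. In a real system, an LLM makes that choice and can loop, inspecting results and searching again. The sketch below replaces the LLM with hypothetical keyword rules and stub tools (vector_search, sql_query, graph_lookup), purely to show the control flow.

```python
import re


# Hypothetical stub tools; real ones would hit a vector DB, SQL, a graph store.
def vector_search(q):
    return [f"vector hits for: {q}"]


def sql_query(q):
    return [f"structured rows for: {q}"]


def graph_lookup(q):
    return [f"graph neighbours for: {q}"]


def plan(question):
    """Rule-based stand-in for the LLM deciding which tools to call."""
    steps = []
    if re.search(r"\b(order|status|quantity)\b", question, re.I):
        steps.append(sql_query)
    if re.search(r"\b(why|cause|similar)\b", question, re.I):
        steps.append(vector_search)
    if re.search(r"\b(supplier|depends|related)\b", question, re.I):
        steps.append(graph_lookup)
    return steps or [vector_search]  # default to semantic search


def agentic_search(question):
    evidence = []
    for tool in plan(question):
        evidence.extend(tool(question))
    return evidence


print(agentic_search("Why is supplier X always late on this product line?"))
```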

Andrej Karpathy recently brought attention to a related pattern: the LLM Wiki. An AI agent pre-compiles raw source data into a structured, interlinked knowledge base. Instead of retrieving chunks of text each time, the agent queries a clean, organized artifact it has already built, compounding intelligence over time.
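Here is a toy version of the compile-then-query idea, under heavy assumptions. The raw notes, machine names, and supplier names are invented. Simple aggregation stands in for the summarization an LLM agent would actually perform. The point is the shape: raw records are compiled once into interlinked pages, and later questions read those pages instead of re-retrieving raw chunks.

```python
from collections import defaultdict

# Invented raw maintenance notes: (machine, supplier, note).
raw_notes = [
    ("Press 4", "Acme Bearings", "high temperature alarm"),
    ("Press 4", "Acme Bearings", "running hot, bearing replaced"),
    ("Lathe 2", "Delta Motors", "waiting on parts"),
]


def compile_wiki(notes):
    """One-off compile step: turn raw notes into interlinked pages.
    Plain aggregation stands in for the LLM summarisation an agent would do."""
    wiki = defaultdict(lambda: {"notes": [], "links": set()})
    for machine, supplier, note in notes:
        wiki[machine]["notes"].append(note)
        wiki[machine]["links"].add(supplier)
        wiki[supplier]["links"].add(machine)
    return dict(wiki)


wiki = compile_wiki(raw_notes)
# Later questions read the compiled page instead of re-retrieving raw chunks.
print(wiki["Press 4"]["notes"], sorted(wiki["Acme Bearings"]["links"]))
```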

For most ERP environments today, a well-built RAG pipeline is the right move. But the context window constraints that made vector chunking mandatory are shrinking fast. If you design your architecture without any exit ramp toward agentic patterns, you will be updating it again in eighteen months.

Killing the Hallucinations

An AI hallucination occurs when an LLM generates output that is fluent and persuasive but factually false. These models do not “know” facts; they predict the most probable next token based on patterns learned during training.
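To see what “predicting probabilities” means, and why the zero-temperature rule below matters, here is a minimal softmax-with-temperature sketch. It runs over made-up scores (logits) for three candidate next tokens. The function is illustrative and does not reproduce any provider's implementation.

```python
import math


def next_token_distribution(logits, temperature):
    """Softmax with temperature. As temperature approaches 0 the distribution
    collapses onto the highest-scoring token (greedy decoding)."""
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [l / temperature for l in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate next tokens.
logits = [2.0, 1.5, 0.3]
for t in (1.0, 0.5, 0):
    print(t, [round(p, 3) for p in next_token_distribution(logits, t)])
```

Lower temperatures sharpen the distribution toward the top-scoring token, and at zero the decoding becomes greedy. Hosted APIs can still show small run-to-run differences at temperature zero. Treat it as a strong reduction in variability, not an absolute guarantee.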

In complex industrial environments, a hallucination is a critical failure. The AI might confidently invent a maintenance schedule that fits a statistical pattern but doesn’t exist in reality. It might suggest ordering parts that were discontinued a decade ago simply because they appeared frequently in old logs.

We cannot mathematically eliminate hallucinations, but we must reduce them until they are statistically irrelevant. Here are strict rules you must enforce:

- Require Strict Grounding: Force the LLM to rely exclusively on the RAG context provided by your vector database. Explicitly instruct it not to fall back on its pre-trained internet knowledge. That knowledge cannot be switched off, but the prompt can forbid its use.

- Implement Zero Temperature: Set the model temperature to zero. This removes the sampling randomness (the model’s “creativity”) and makes outputs as deterministic and repeatable as the provider allows. We want surgical precision, not creative writing.

- Build a Mandatory Fallback: Through rigorous prompt engineering, force the LLM to reply “Data not found” whenever the answer would depend on information outside the retrieved context.

- Prioritize Data Quality: None of this advanced architecture matters if your source data is garbage. The ERP data flowing into the vector database must be clean and normalized.
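The grounding and fallback rules can be partly enforced in code rather than left to the prompt alone. The sketch below is provider-neutral. SYSTEM_INSTRUCTION and GENERATION_SETTINGS are hypothetical names, to be mapped onto whatever fields your LLM SDK exposes. The citation check in enforce_grounding is one possible post-processing safeguard. It assumes you instruct the model to cite record IDs in square brackets.

```python
import re

FALLBACK = "Data not found"

SYSTEM_INSTRUCTION = (
    "You answer questions about ERP records. Use ONLY the records provided in the "
    "context. Cite every record you use by its ID in square brackets, e.g. [QC-1001]. "
    f"If the records do not contain the answer, reply exactly: {FALLBACK}"
)

# Hypothetical, provider-neutral settings; map them onto your SDK's fields.
GENERATION_SETTINGS = {"temperature": 0.0}


def enforce_grounding(answer: str, retrieved_ids: list[str]) -> str:
    """Post-check: replace answers that cite nothing, or cite IDs that were
    not in the retrieved context, with the mandatory fallback."""
    if answer.strip() == FALLBACK:
        return FALLBACK
    cited = set(re.findall(r"\[([A-Za-z0-9-]+)\]", answer))
    if not cited or not cited <= set(retrieved_ids):
        return FALLBACK
    return answer
```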

Actionable Insights for IT Leaders

Stop chasing the hype. Simply buying an AI license does not fix a broken enterprise architecture. If your ERP is a mess of unstructured workarounds today, feeding it to an AI will only automate the confusion.

Combine the transactional precision of your ERP with the semantic understanding of a Vector Database, and you finally stop the AI from guessing.

Start by assessing your current data maturity before you buy any AI tools. Evaluate how your users are currently logging issues and whether those descriptions are standardized enough to be vectorized effectively.
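A rough profiling pass over a sample of issue descriptions is one starting point for that assessment. It shows how much of the text is blank, too terse to carry meaning, or duplicated with only cosmetic differences. The function, its min_words threshold, and the sample data are hypothetical illustrations, not a standard metric.

```python
import re


def description_quality(descriptions, min_words=3):
    """Rough profile of free-text issue descriptions before vectorizing them.
    min_words is an arbitrary illustrative threshold, not a standard."""
    total = len(descriptions)
    if total == 0:
        return {"blank_pct": 0.0, "too_short_pct": 0.0, "distinct_texts": 0}
    filled = [d.strip() for d in descriptions if d and d.strip()]
    too_short = sum(1 for d in filled if len(re.findall(r"\w+", d)) < min_words)
    # Collapse case and whitespace to see how many genuinely different texts remain.
    distinct = {re.sub(r"\s+", " ", d.lower()) for d in filled}
    return {
        "blank_pct": 100 * (total - len(filled)) / total,
        "too_short_pct": 100 * too_short / total,
        "distinct_texts": len(distinct),
    }


sample = ["Motor overheating on press 4", "", "hot", "HOT", "waiting on parts from supplier"]
print(description_quality(sample))
```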

Next, evaluate Vector DBs for historical analysis. Map your query intents, and above all, protect your core system by building a decoupled RAG architecture.

Finally, design with an exit ramp. Build your retrieval layer as a modular component, not a monolith. When agentic patterns mature for enterprise use, you should be able to swap or extend your retrieval strategy without rebuilding everything from scratch.
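One way to build that exit ramp is to hide retrieval behind a small interface, so application code never depends on a specific strategy. In this sketch, Retriever is a typing.Protocol. VectorRetriever uses naive word-overlap scoring as a placeholder for a real vector-database query. AgenticRetriever is a hypothetical future strategy that fans out over several retrievers. All class names are illustrative.

```python
from typing import Protocol


class Retriever(Protocol):
    """Anything that can return context passages for a question."""

    def retrieve(self, question: str, k: int) -> list[str]: ...


class VectorRetriever:
    """Placeholder: word-overlap scoring stands in for a real vector-DB query."""

    def __init__(self, docs: list[str]) -> None:
        self.docs = docs

    def retrieve(self, question: str, k: int) -> list[str]:
        words = set(question.lower().split())
        return sorted(self.docs, key=lambda d: -len(words & set(d.lower().split())))[:k]


class AgenticRetriever:
    """Hypothetical future strategy that fans out over several retrievers."""

    def __init__(self, *retrievers: Retriever) -> None:
        self.retrievers = retrievers

    def retrieve(self, question: str, k: int) -> list[str]:
        results: list[str] = []
        for r in self.retrievers:
            results.extend(r.retrieve(question, k))
        return results[:k]


def answer_context(retriever: Retriever, question: str) -> str:
    # Application code depends only on the interface, so strategies can be swapped.
    return "\n".join(retriever.retrieve(question, k=3))


current = VectorRetriever(["press 4 running hot", "waiting on parts"])
future = AgenticRetriever(current, VectorRetriever(["supplier delay report"]))
print(answer_context(current, "why is press 4 hot"))
print(answer_context(future, "why is press 4 hot"))
```

Moving from today's RAG pipeline to an agentic strategy then means passing a different object to answer_context. The calling code stays untouched.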

If your ERP is still struggling to provide basic, clean data today, attaching a semantic AI on top will only make it fail faster.

If your data foundation is broken, AI will only automate your confusion at scale. Fix the architecture before you buy the license.

Written by Andrea Guaccio

May 5, 2026