Europe’s AI Game Changer: Why Small, Local AI Can Be the True Future of the Enterprise

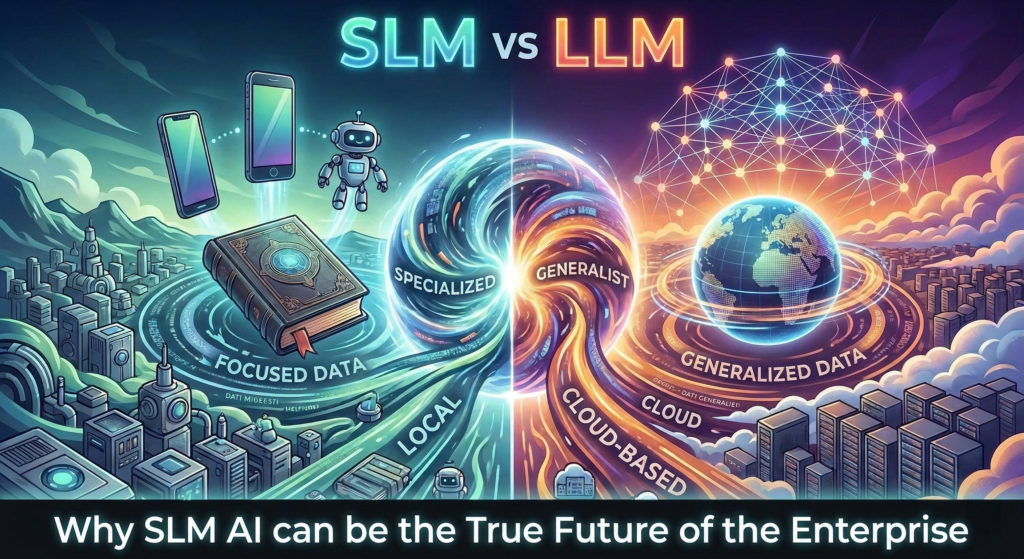

Everyone seems focused on massive AI models with ever-larger parameter counts and ever-broader, universal capabilities. But the real enterprise revolution might actually be about shrinking. If you want to protect your proprietary company data while getting actual ROI, you need to look closely at the potential of the new European AI wave.

Over the past few months, I have been writing about the intersection of artificial intelligence and complex enterprise architecture. We have explored the dangerous illusion of the “homebrew ERP” and discussed why the very people building AI often hesitate to trust it with mission-critical operations. The core issue always comes back to a fundamental mismatch between what generalist AI models are built to do and what a modern supply chain actually requires.

Recently, a piece published by Wired caught my attention. It highlighted a massive shift in the global artificial intelligence race, focusing on the rise of Small Language Models and the concept of “World Models” championed by pioneers like Yann LeCun.

This pivot towards specialized models addresses the exact challenges we navigate daily on the factory floor. It is a vision now backed by significant capital: LeCun’s new startup, AMI Labs, has just secured $1.03 billion in seed funding at a $3.5 billion valuation to build AI that truly understands the physical world.

Generalist Large Language Models are fantastic for consumer applications. But dealing with industrial manufacturing, procurement logic, and warehouse material receipts demands a system inherently tailored to your specific business logic.

You require a system you actually own and, crucially, one that requires a fraction of the hardware resources, drastically cutting both your operational costs and your energy consumption.

The European AI Renaissance: A Real Opportunity?

For a while, it seemed like the artificial intelligence race was exclusively dominated by massive tech giants across the ocean. Europe appeared to be lagging behind, bogged down by heavy regulations and a lack of hyper-scale computing infrastructure. However, the narrative could be on the verge of a major shift. Europe now has a real opportunity to recover its lost ground by entirely changing the rules of the game.

Instead of trying to build bigger and more expensive generalist models, European startups are focusing on efficiency, data sovereignty, and specialized applications. Companies like Mistral in France have proven that you can build highly capable models that rival the giants using a fraction of the parameters. Aleph Alpha in Germany is making massive strides by focusing specifically on B2B environments where data security and transparent decision-making are non-negotiable. Research labs like Kyutai are pushing the boundaries of open science in AI.

These companies are actively marching forward with a completely different philosophy. They are proving that the future of enterprise technology might not require a monolithic, trillion-parameter brain sitting in a distant data center.

Small Language Model: Definitions and Mechanics

This brings us to the concept of the Small Language Model. An SLM is exactly what it sounds like, but the mechanics behind it are fascinating. While massive models operate with over a trillion parameters (the internal variables or “knobs and dials” that determine how a neural network processes information), an SLM typically runs on fewer than 10 billion parameters, usually ranging between 1 and 7 billion.

But small does not mean simple. SLMs achieve incredible performance through specific engineering techniques:

- Knowledge Distillation: Smaller “student” models are trained to mimic the outputs of massive “teacher” models, retaining core capabilities at a fraction of the size.

- High-Quality Data: Instead of scraping the entire noisy internet, SLMs are trained on highly curated, textbook-quality datasets.

- Quantization: This process compresses the model’s memory footprint so drastically that a highly capable model can literally run on a standard laptop or a single local server.
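
To make the quantization point concrete, here is a rough back-of-the-envelope sketch in Python. It assumes that weight memory is simply the parameter count multiplied by the bits stored per parameter, and it ignores activations, caches, and runtime overhead, so treat the results as a lower bound rather than a sizing guide. The function name and the precision labels are my own illustration and are not taken from any specific library.

```python
# Rough weight-memory estimate: parameters x bits per parameter / 8 bytes.
# Assumption: only the weights are counted; real memory usage will be higher.

BITS_PER_PARAM = {"fp32": 32, "fp16": 16, "int8": 8, "int4": 4}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory needed for model weights, in gigabytes (1 GB = 1e9 bytes)."""
    bits = BITS_PER_PARAM[precision]
    return num_params * bits / 8 / 1e9


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model at different precisions.
    for precision in BITS_PER_PARAM:
        gb = weight_memory_gb(7e9, precision)
        print(f"7B parameters at {precision}: ~{gb:.1f} GB")
```

Under these assumptions, a 7-billion-parameter model drops from roughly 14 GB at 16-bit precision to about 3.5 GB at 4-bit precision, which is why it can fit in the memory of an ordinary laptop.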

Why could this be a real game changer for your business? The answer comes down to cost, focus, and ownership.

Crucially, adopting an SLM does not mean building one from the ground up. You download a pre-trained model that already understands language, and then you fine-tune it with your domain-specific data. Think of it like hiring an employee who already speaks the language perfectly and simply teaching them your company’s internal operating procedures.

Training and running a massive LLM requires staggering amounts of computational power and financial investment. Most companies cannot afford to train their own from scratch, which forces them to rent access via APIs. This creates a massive bottleneck for industrial applications.

As a Senior Logistic Consultant working with systems like Infor LN, I deal with highly sensitive data every single day. We manage complex Bills of Materials, exact pricing structures, quality inspection parameters, and proprietary manufacturing workflows.

Sending this highly sensitive, highly proprietary data to a third-party cloud to be processed by a generalist AI is a security risk. Many CEOs and CFOs flatly refuse to do it, and rightfully so. This is the exact reason I wrote about The AI Exodus and why builders do not trust the building.

An SLM solves this problem brilliantly. Because of the optimizations mentioned earlier, an SLM can run locally on your own servers or within your secure private cloud. It means your company actually possesses the intelligence. The data never leaves your secure perimeter. You get the benefits of advanced machine learning without compromising your intellectual property, all while consuming significantly less electricity.

For a deeper visual breakdown of how these models function and why they are so effective for specialized tasks, I highly recommend watching this excellent explanation from Salesforce AI Research Lab.

World Models and the Physical Reality of Logistics

The Wired piece also touched upon a concept that is critical for anyone working in manufacturing and supply chain management. Yann LeCun, a leading figure in AI, has been vocal about the limitations of current LLMs. He argues that these models simply predict the next logical word in a sequence. They possess zero understanding of the physical world.

If you ask a generalist LLM to optimize a cross-docking material flow in a warehouse, it might give you a response that sounds grammatically perfect but is physically impossible to execute.

LeCun advocates for “World Models.” These are systems designed to understand the physical constraints and realities of the environment they operate in. In our industry, the “world” is the factory floor. It is the physics of a vertical warehouse, the rigid sequence of a production routing, and the hard limitations of inventory.

When you combine the localized, secure nature of a Small Language Model with the specific, deterministic logic of a robust ERP system, you create something incredibly powerful. You are taking an SLM, training it on your specific historical ERP data, and using it as a highly specialized agent that actually understands the physical and logistical constraints of your specific company.

This is why the “SaaS-pocalypse” narrative I discussed in my previous articles is simply wrong. The core deterministic SaaS platforms will remain the backbone of the enterprise. Localized SLMs could serve as the secure, specialized brains that interact with them.

Actionable Insights for Enterprise Leaders

If you are a CEO, CFO, or IT leader looking to integrate artificial intelligence without risking your data or your budget, here are the practical steps you should consider right now.

- Audit Your Data Infrastructure: Small Language Models require high-quality data to be effective. Before you invest in any local AI solution, ensure your ERP data is clean. In my experience with data migration and system analysis, companies severely underestimate how much legacy garbage exists in their databases. Clean your house first.

- Shift Focus from Size to Specificity: Stop worrying about which tech giant has the model with the most parameters. Start evaluating smaller, open-weight models that could be fine-tuned specifically for your industry vertical.

- Evaluate Local Deployment Capabilities: Speak with your IT team about the hardware required to run an SLM locally. The costs of local inference hardware are dropping rapidly. Calculate the ROI of a one-time hardware investment for a local model versus the recurring, unpredictable costs (and energy consumption) of API tokens from a cloud provider.

- Identify Narrow Use Cases: Do not try to automate your entire supply chain at once. Pick a specific, highly repetitive task. Use a localized SLM to assist with matching purchase orders to complex supplier invoices or analyzing Quality Management Non-Conformance Reports. Prove the value locally before scaling.

- Get Your Hands Dirty: As with any emerging technology, reading about it is never enough. You must actively test and evaluate these solutions across different scenarios and various models. If you do not start experimenting and building proofs of concept, you will never truly grasp the tangible value of these models for your operations. You simply have to get your hands dirty.
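
The ROI comparison from the local deployment step can be sketched as a simple break-even calculation. This is a minimal illustration, assuming flat monthly costs on both sides. The function name and every figure in the example are hypothetical placeholders, so plug in your own hardware quotes, energy costs, and API invoices.

```python
import math
from typing import Optional


def break_even_months(hardware_cost: float,
                      monthly_local_cost: float,
                      monthly_api_cost: float) -> Optional[int]:
    """First whole month at which cumulative savings cover the hardware cost.

    Returns None when the API is not more expensive per month than running
    locally, because the hardware investment then never pays for itself.
    """
    monthly_saving = monthly_api_cost - monthly_local_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


if __name__ == "__main__":
    # Hypothetical figures: 24,000 for a local inference server,
    # 500 per month for power and maintenance, 2,500 per month in API tokens.
    months = break_even_months(24_000, 500, 2_500)
    print(f"Break-even after {months} months")
```

A flat-cost model like this is deliberately crude. It leaves out staff time, hardware depreciation, and usage growth, but it is usually enough to tell whether a proof of concept is worth a closer look.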

The True Power Might Just Be Local

Europe might have missed the first explosive wave of generalist consumer chatbots, but the enterprise AI race is a marathon. By focusing on Small Language Models, data sovereignty, and systems that understand the physical world, the European tech ecosystem is positioning itself to provide exactly what industrial companies actually need.

Ultimately, when you look at the real bottlenecks in your business, you will realize you don’t need an AI that knows everything about the world. You just need one that knows absolutely everything about your specific operations, running securely behind your own firewall.

That could be the true future of enterprise technology.

Written by Andrea Guaccio

March 24, 2026