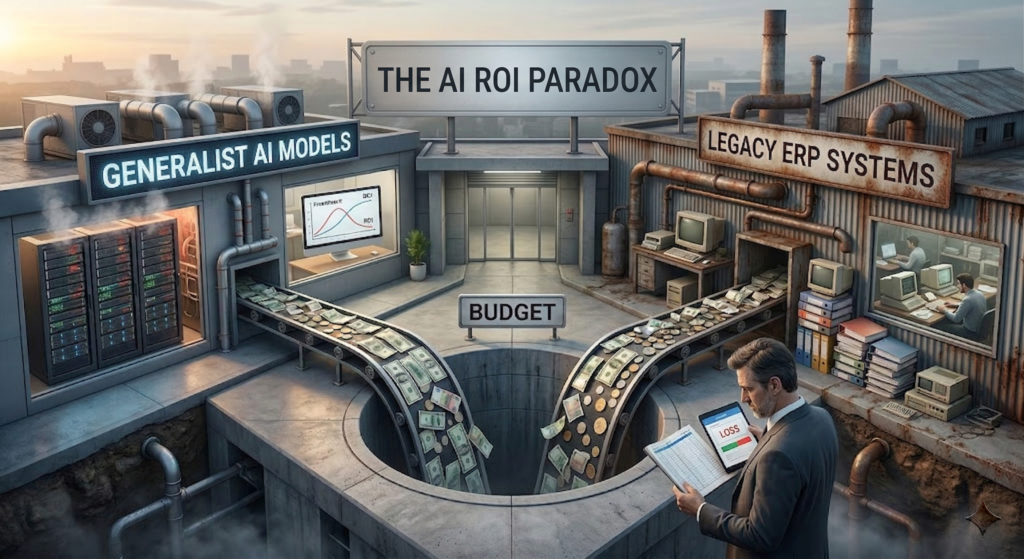

The AI ROI Paradox: Why Generalist Models and Legacy ERPs Will Bankrupt Your Budget

In October 2025, Deloitte published a report confirming what every consultant should have known for quite some time. Companies are pouring billions of dollars into Generative Artificial Intelligence, but the promised Return on Investment remains elusive.

We expected AI to instantly automate our enterprise systems and drastically reduce operational costs. Instead, executives are looking at skyrocketing software bills, stalled pilot programs, and a distinct lack of measurable business value. The market is clearly still confused, and the recent panic surrounding SaaS business models reflects this deep uncertainty.

If your company is struggling to find the ROI in your new AI initiatives, the problem does not lie with the concept of artificial intelligence itself. The problem lies mostly at the factory level.

In my opinion, we are currently witnessing a huge architectural mismatch. Organizations are making two mistakes simultaneously: they are buying the wrong type of intelligence, and they are trying to plug it into the wrong type of software.

Let me break down exactly where I see your AI budget bleeding and how I believe you can reverse the trend by rethinking your entire approach to enterprise systems.

The Delusion of the Universal Brain

In my recent experience, I have noticed a recurring, dangerous instinct: the urge to chase the biggest, most famous AI models on the market. From my perspective, most people seem entirely focused on generalist Large Language Models (LLMs) equipped with trillions of parameters and progressively broader, universal capabilities.

Don’t get me wrong, I think these massive generalist models are undeniably fantastic for a lot of consumer applications.

However, my vision of the future of enterprise tech is quite different. In my daily work I constantly see that enterprise operations demand a completely different set of capabilities.

I strongly agree with AI pioneer Yann LeCun when he points out that these massive LLMs possess zero inherent understanding of the physical world. They are just probabilistic engines designed to predict the next logical word. In my view, it makes absolutely no sense to keep chasing models with more and more parameters if your goal is to execute highly specific business logic.

If you ask a generalist LLM to optimize a cross-docking material flow in a busy warehouse, or to recalculate a multi-level Bill of Materials based on a sudden component shortage, I can guarantee you are asking for trouble. It might generate a response that sounds grammatically flawless and incredibly confident, but I’ve seen firsthand how that same response will likely be physically impossible to execute or financially disastrous.

I believe the true ROI in enterprise software does not reside in trillion-parameter generalist knowledge. It resides in hyper-specialization.

I see the answer to this specific waste of resources clearly: the Small Language Model (SLM). An SLM typically operates on fewer than 10 billion parameters and is trained on highly curated, textbook-quality datasets. Instead of knowing a little bit about everything on the internet, an SLM is designed to know absolutely everything about one specific domain.

When you deploy a locally hosted SLM, you take ownership of the intelligence. Your proprietary data never leaves your secure perimeter. You gain access to a highly specialized agent that understands the physical and logistical constraints of your specific operations, all while consuming a fraction of the computational power and API costs required by the cloud giants.
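To make the cost argument concrete, here is a minimal sketch of a break-even comparison between renting API tokens and hosting your own model. Every name and number in it (`ApiPlan`, `SelfHostedPlan`, the prices, the fixed monthly cost) is a hypothetical placeholder, not a real vendor figure, and the linear cost model is a simplifying assumption. Plug in your own quotes before drawing conclusions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ApiPlan:
    price_per_million_tokens: float  # hypothetical blended price, in dollars


@dataclass
class SelfHostedPlan:
    fixed_monthly_cost: float        # hypothetical: hardware amortization, power, staff
    cost_per_million_tokens: float   # hypothetical marginal cost of local inference


def monthly_cost_api(plan: ApiPlan, million_tokens: float) -> float:
    """Pure pay-per-use: cost scales linearly with volume."""
    return plan.price_per_million_tokens * million_tokens


def monthly_cost_self_hosted(plan: SelfHostedPlan, million_tokens: float) -> float:
    """Fixed cost plus a (usually smaller) marginal cost per token."""
    return plan.fixed_monthly_cost + plan.cost_per_million_tokens * million_tokens


def break_even_volume(api: ApiPlan, hosted: SelfHostedPlan) -> Optional[float]:
    """Monthly volume (in millions of tokens) above which self-hosting is cheaper.

    Returns None when self-hosting never catches up, i.e. when its marginal
    cost is not lower than the API price.
    """
    saving_per_million = api.price_per_million_tokens - hosted.cost_per_million_tokens
    if saving_per_million <= 0:
        return None
    return hosted.fixed_monthly_cost / saving_per_million


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    api = ApiPlan(price_per_million_tokens=10.0)
    hosted = SelfHostedPlan(fixed_monthly_cost=4000.0, cost_per_million_tokens=2.0)
    print(break_even_volume(api, hosted))  # 500.0 (million tokens per month)
```

With these placeholder inputs, the two options cost the same at 500 million tokens per month. Below that volume the API is cheaper, above it the self-hosted model wins. The sketch deliberately ignores fine-tuning effort and quality differences, which belong in a fuller business case.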

The Trap of the Customized Chassis

Acquiring the correct, specialized AI model only solves half of the equation. The second major reason why AI returns are so elusive is the environment where this intelligence is being deployed.

As I detailed extensively in my previous article, “The AI Killer: Why Dirty Data Will Bankrupt Your Agent”, there is a harsh reality killing enterprise AI: you cannot drop a highly tuned Ferrari engine into a rusted, thirty-year-old chassis and expect a miracle.

Historically, companies wore their heavily customized, on-premise ERP systems as a badge of honor. Today, that extreme customization is a technological prison inherently filled with dirty data.

Because AI learns exclusively from historical patterns, feeding it ten years of obsolete legacy data just teaches it to scale your inefficiencies at lightspeed. This “garbage in, disaster out” cycle is exactly why companies are paying premium prices for AI software but seeing zero ROI.

Actionable Insights for Enterprise Leaders

If you are a CEO, CFO, or IT leader tasked with justifying your current AI budget or planning your next ERP migration, here are the practical steps you must take to secure a measurable return on your investment.

1. Convert Your Core Customizations: stop treating your ERP like a bespoke piece of tailoring. Actively convert legacy customizations into modern extensions. While AI can technically read custom tables, it lacks the semantic pre-training to understand your unique fields, leading to costly re-training cycles or hallucinations. By moving custom logic to external API-driven extensions, your core database remains standard and instantly understandable to the AI.

2. Shift Your Focus to Small Language Models: stop worrying about which massive tech giant has released the model with the most parameters this week. Begin evaluating open-weight models that can be fine-tuned specifically for your industry vertical. Calculate the ROI of hosting an SLM in your own controlled environment (whether on dedicated local hardware or in a private environment on a cloud provider like AWS) against the unpredictable, recurring costs of renting generalist API tokens.

3. Execute a “Clean Cut” Data Migration: before you launch any AI pilot program, you must clean your house. When migrating to a modern Cloud ERP, be utterly ruthless with your historical data. Do not migrate fifteen years of closed invoices or dead supplier codes. Archive that information in a separate Data Lake and use Generative BI to query it when necessary. Bring only your active master data, open transactions, and current inventory balances into the new system. A high signal-to-noise ratio is the fundamental prerequisite for AI accuracy. If you want a deep dive into the topic, start here: The Migration Playbook.
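The “Clean Cut” rule in step 3 can be sketched as a simple partition of legacy records into what gets migrated and what gets archived. This is only an illustration under stated assumptions: the `Record` type, the `status` field, and the `LIVE_STATUSES` values are hypothetical and do not correspond to any specific ERP schema.

```python
from dataclasses import dataclass

# Hypothetical status values that mark a record as still relevant to daily operations.
LIVE_STATUSES = {"active", "open", "current"}


@dataclass(frozen=True)
class Record:
    record_id: str
    kind: str    # e.g. "supplier", "invoice", "inventory_balance"
    status: str  # e.g. "active", "inactive", "open", "closed", "current"


def clean_cut(records):
    """Split records into (migrate, archive) lists based on their status."""
    migrate, archive = [], []
    for record in records:
        # Live records go to the new system; everything else goes to the data lake.
        target = migrate if record.status in LIVE_STATUSES else archive
        target.append(record)
    return migrate, archive


legacy = [
    Record("SUP-001", "supplier", "active"),
    Record("SUP-002", "supplier", "inactive"),   # dead supplier code
    Record("INV-1001", "invoice", "closed"),     # closed invoice
    Record("INV-1002", "invoice", "open"),
    Record("BAL-7", "inventory_balance", "current"),
]

migrate, archive = clean_cut(legacy)
print([r.record_id for r in migrate])  # ['SUP-001', 'INV-1002', 'BAL-7']
print([r.record_id for r in archive])  # ['SUP-002', 'INV-1001']
```

In a real migration the decision would likely depend on more than a single status flag (open balances, retention rules, audit requirements), but the principle is the same: decide explicitly, record by record, what earns a place in the new system.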

My Final Take

The ROI paradox highlighted by Deloitte serves as a highly necessary wake-up call for the entire tech industry. It reminds us that simply purchasing a subscription to the latest trending software does not magically transform an organization into an autonomous powerhouse.

True enterprise intelligence requires profound architectural discipline. The market will undoubtedly see a purge of companies that try to force generalist AI to fix their broken legacy processes. However, the organizations that combine the hyper-specialized focus of Small Language Models with the standardized agility of Cloud ERP Extensibility will finally stop chasing the hype. They will build the foundation required to generate real, compounding value on the factory floor.

Written by Andrea Guaccio

March 28, 2026